Since data management covers both reading and manipulation of data, there’s always a need to have an audit trail. It is necessary because, not only so we have an easy option of restoring the data or checking its timeline, but also to encourage administrators and clients to responsibly use the data at their disposal. Database auditing covers simple practices that make this possible.

Transactional databases are integral part of nearly every business. They allow for real-time data management related to everyday’s topics; employees, clients, banking transactions and similar entities.

Since data management covers both reading of data and their manipulation (CRUD – Create Read Update Delete). There’s always a need to have an audit trail, not only so we always have an easy option of restoring data or checking its timeline, but also to encourage administrators and clients to responsibly use the data at their disposal.

For similar reasons, it’s also desirable to track database schema changes. While it shouldn’t be used for versioning, it enables quick look into schema changes and people involved, again to encourage developers and administrators to responsibly manipulate database schema.

Both schema and data auditing will be explained for SQL Server platform.

Schema Auditing

Schema auditing is very simple to implement and doesn’t use significant amount of resources, so it’s recommended to be used in any serious database. One thing to note is that some deployment tools turn off DDL triggers by default and deployment changes should also be tracked.

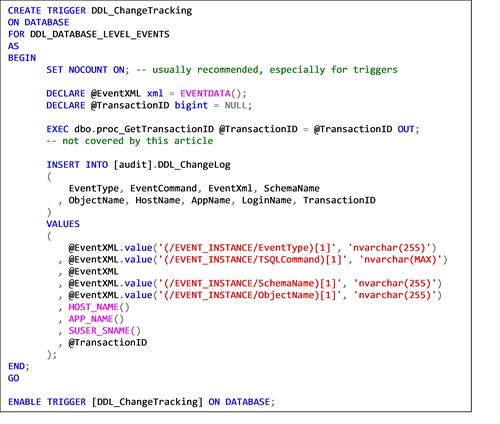

Implementation is done using database-wide DDL trigger, which can only behave as an “after” trigger, meaning the operation happens during the same transaction, but after the change was saved.

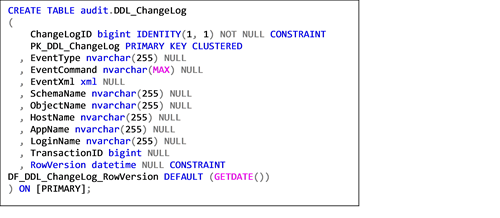

Schema of the auditing table is solely dependent on the business requirements, but generally recommended attributes would be:

- ChangeLogID – surrogate key used as primary key for the auditing table

- EventType – describes triggering event

- EventCommand – tracks DDL query used to change schema

- EventXML – resultset of EVENTDATA() function which returns details about the event inside XML format; function returns data only inside of DDL or logon trigger

- SchemaName

- ObjectName

- HostName – name of the host from which the command was executed

- AppName – application used to execute the statement

- TransactionID – requires advanced permissions or signature, recommended for implementation by experienced administrators

- RowVersion – preferably datetime

Code for creation of schema audit is very simple and should be self-explanatory. TransactionID is included just to hint at that option, but it’s probably unnecessary for most auditing solutions.

Data Auditing

While not too complicated to implement, data auditing requires continuous maintenance which schema auditing doesn’t; at least for the implementation covered by this article.

Since shadow table should have the same schema as the source table, any column changes done on the source table will need to be done on the shadow table and trigger. Forgetting to do so might render the table unusable as well as any related code. Especially, if the trigger uses “SELECT *” to insert the data into shadow table.

While “SELECT *” should generally be avoided, in this implementation it might be preferable since any omitted changes of shadow table will cause trigger to fail during development (or QA) and force the developer to change the shadow table as well, thus having the whole table covered by the auditing solution. Nuisances during development are likely better option than noticing specific columns weren’t being audited for months.

Removal of columns might cause issues with “SELECT *” if the column history must be preserved, but there’s rarely a valid reason to remove a column and keep its history so there’s no need to cover this in depth.

Recommended columns for data auditing would be:

- AuditID – surrogate primary key

- AuditDate – datetime column used to track time of change

- AuditUser – tracks which database user changed the data; preferably SUSER_SNAME() or ORIGINAL_LOGIN()

- multiple application users using the same database user will render this column practically useless, so additional layer of auditing via application might be required

- AuditAppName – can help to identify is the change was done via custom application or via SSMS

- AuditAction – (I,U,D) should be enough

- if looking to save space we could use shadow table only to track previous row version and count on the latest row version to be in the source table; might make history querying harder which again shows there’s no universal approach to data auditing but it largely depends on business and hardware limitations

- All columns from source table

- To use “SELECT *” auditing columns should be at the beginning of shadow table, to allow addition of new columns at the end of the source and shadow table

Reader should keep in mind that introducing data auditing solution practically doubles all data changing operations, so it is strongly advised to consider hardware limitations and/or selective auditing.

Since business logic can change over time it’s recommended to consider not copying constraints (especially not foreign keys) to shadow table and leave all audit columns nullable. Validations on source table should be more than enough; auditing solution is intended to track data history, not to enforce various business requirements. Not copying constraints will also help to avoid slowing down insert operations into shadow table. Also, another thing to consider is trigger order, if source tables already have active triggers, without explicitly setting auditing trigger as last, order of trigger execution is not strictly set.

.png.aspx?width=500&height=308)

.png.aspx?width=500&height=375)

Tags: auditing, data management, database, database auditing, SQL Server